End-to-end autonomous drone navigation stack for GPS-denied flight in caves, mines, tunnels, and industrial interiors. Covers every layer from sensor fusion and GPU mapping to 6-level autonomy management, Smart RTL, MAVLink flight control, a custom binary comms protocol, and a cross-platform ground station — all designed, architected, and implemented solo.

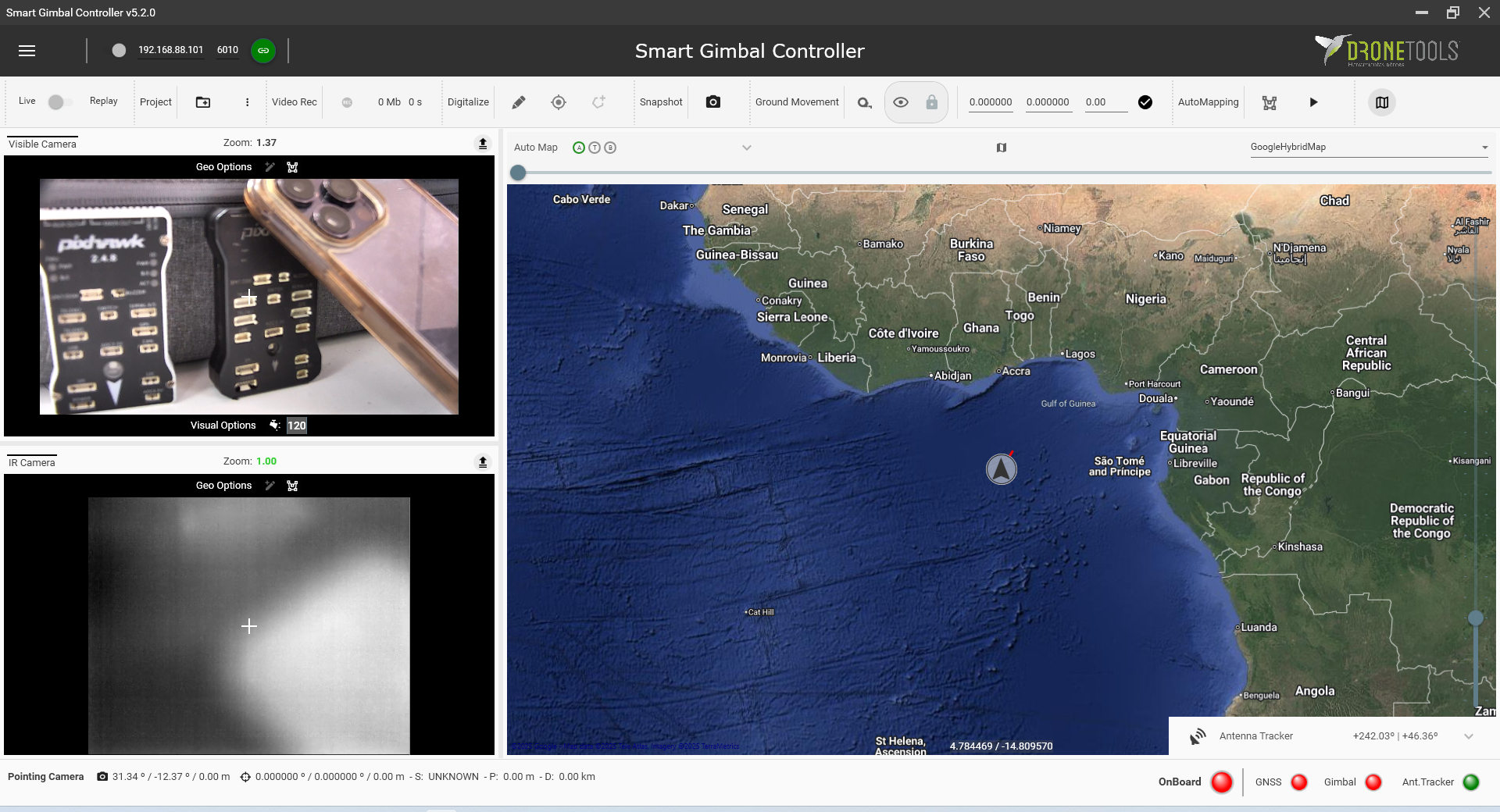

Three-pillar architecture: autonomous flight stack, multi-session relocalization for repeat inspections, and a cross-platform Qt6 GCS (Linux · Windows · Android)

Always-on Smart RTL — weighted A* with clearance-aware costs, 0.5 s replan cycle, dynamic prefix + cached static corridor; immediately executable on comms loss
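As a rough illustration of weighted A* with clearance-aware costs, here is a minimal C++17 sketch. It assumes a 2D grid whose cells store distance to the nearest obstacle (as an ESDF would provide); the real planner presumably works in 3D. The names `ClearanceGrid`, `PlannerParams`, `planWeightedAStar`, and all tuning values are hypothetical and not taken from the project.

```cpp
#include <algorithm>
#include <cmath>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

// Hypothetical 2D clearance grid; a real planner would query the 3D ESDF.
struct ClearanceGrid {
    int width = 0;
    int height = 0;
    std::vector<float> clearance;  // metres to nearest obstacle, row-major
    float at(int x, int y) const { return clearance[y * width + x]; }
    bool inside(int x, int y) const { return x >= 0 && y >= 0 && x < width && y < height; }
};

struct Cell { int x; int y; };

// Assumed tuning values for illustration only.
struct PlannerParams {
    float min_clearance = 0.3f;     // cells below this are untraversable
    float safe_clearance = 1.0f;    // no penalty at or above this clearance
    float penalty_gain = 5.0f;      // cost multiplier per metre of missing clearance
    float heuristic_weight = 1.5f;  // w > 1 trades optimality for search speed
};

// Returns the cell path from start to goal (inclusive), or empty if unreachable.
std::vector<Cell> planWeightedAStar(const ClearanceGrid& grid, Cell start, Cell goal,
                                    const PlannerParams& p = PlannerParams{}) {
    if (!grid.inside(start.x, start.y) || !grid.inside(goal.x, goal.y)) return {};
    const int n = grid.width * grid.height;
    const int start_id = start.y * grid.width + start.x;
    const int goal_id = goal.y * grid.width + goal.x;
    auto heuristic = [&](int x, int y) {
        return std::hypot(static_cast<float>(x - goal.x), static_cast<float>(y - goal.y));
    };

    std::vector<float> cost(n, std::numeric_limits<float>::infinity());
    std::vector<int> parent(n, -1);
    std::vector<bool> closed(n, false);
    using Entry = std::pair<float, int>;  // (f = g + w * h, cell id)
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> open;

    cost[start_id] = 0.0f;
    open.push({p.heuristic_weight * heuristic(start.x, start.y), start_id});

    while (!open.empty()) {
        const int cur = open.top().second;
        open.pop();
        if (closed[cur]) continue;  // stale queue entry
        closed[cur] = true;
        if (cur == goal_id) break;
        const int cx = cur % grid.width;
        const int cy = cur / grid.width;
        for (int dy = -1; dy <= 1; ++dy) {
            for (int dx = -1; dx <= 1; ++dx) {
                const int nx = cx + dx;
                const int ny = cy + dy;
                if ((dx == 0 && dy == 0) || !grid.inside(nx, ny)) continue;
                const int id = ny * grid.width + nx;
                const float c = grid.at(nx, ny);
                if (closed[id] || c < p.min_clearance) continue;
                // Step length inflated by how far the cell falls short of safe clearance.
                const float penalty = p.penalty_gain * std::max(0.0f, p.safe_clearance - c);
                const float step = std::hypot(static_cast<float>(dx), static_cast<float>(dy));
                const float g = cost[cur] + step * (1.0f + penalty);
                if (g < cost[id]) {
                    cost[id] = g;
                    parent[id] = cur;
                    open.push({g + p.heuristic_weight * heuristic(nx, ny), id});
                }
            }
        }
    }

    if (goal_id != start_id && parent[goal_id] < 0) return {};
    std::vector<Cell> path;
    for (int id = goal_id; id != -1; id = parent[id]) {
        path.push_back({id % grid.width, id / grid.width});
    }
    std::reverse(path.begin(), path.end());
    return path;
}
```

This sketch replans from scratch on every call and allows diagonal moves past obstacle corners. The dynamic prefix plus cached static corridor described above would sit on top of a search like this and is not modelled here.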

6-level autonomy hierarchy (Full Exploration → Failsafe) with automatic degradation, independent safety watchdog, ESDF-filtered velocity gate, and stream-health failsafe trigger
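One way automatic degradation across six levels could be structured is sketched below. Only the endpoints (Full Exploration and Failsafe) come from the description above. The intermediate level names, the health fields, the timeouts, and the mapping from faults to levels are all placeholder assumptions.

```cpp
#include <chrono>
#include <cstdint>

// Only FullExploration and Failsafe are named in the text; the four
// intermediate levels are placeholders for illustration.
enum class AutonomyLevel : std::uint8_t {
    FullExploration = 0,
    Intermediate1,
    Intermediate2,
    Intermediate3,
    Intermediate4,
    Failsafe
};

// Hypothetical health inputs sampled once per control tick.
struct HealthSnapshot {
    std::chrono::milliseconds odom_age{0};  // time since last odometry message
    std::chrono::milliseconds map_age{0};   // time since last map update
    bool comms_link_up = true;
};

// Assumed thresholds, not taken from the project.
struct Thresholds {
    std::chrono::milliseconds odom_timeout{500};
    std::chrono::milliseconds map_timeout{2000};
    int recover_ticks = 3;  // consecutive healthy ticks needed per recovered level
};

// Least restrictive level the current health allows (assumed mapping).
inline AutonomyLevel ceilingFor(const HealthSnapshot& h, const Thresholds& t) {
    if (h.odom_age > t.odom_timeout) return AutonomyLevel::Failsafe;  // stream-health trigger
    if (h.map_age > t.map_timeout) return AutonomyLevel::Intermediate4;
    if (!h.comms_link_up) return AutonomyLevel::Intermediate2;
    return AutonomyLevel::FullExploration;
}

class AutonomyManager {
public:
    explicit AutonomyManager(Thresholds t = Thresholds{}) : t_(t) {}

    // Degrades immediately, recovers one level at a time, and latches Failsafe.
    AutonomyLevel update(const HealthSnapshot& h) {
        if (level_ == AutonomyLevel::Failsafe) return level_;  // needs explicit reset()
        const AutonomyLevel ceiling = ceilingFor(h, t_);
        if (ceiling > level_) {
            level_ = ceiling;
            healthy_ticks_ = 0;
        } else if (ceiling < level_) {
            if (++healthy_ticks_ >= t_.recover_ticks) {
                level_ = static_cast<AutonomyLevel>(static_cast<std::uint8_t>(level_) - 1);
                healthy_ticks_ = 0;
            }
        } else {
            healthy_ticks_ = 0;
        }
        return level_;
    }

    void reset() {
        level_ = AutonomyLevel::FullExploration;
        healthy_ticks_ = 0;
    }

    AutonomyLevel level() const { return level_; }

private:
    Thresholds t_;
    AutonomyLevel level_ = AutonomyLevel::FullExploration;
    int healthy_ticks_ = 0;
};
```

Degrading immediately but recovering slowly (hysteresis) keeps a flapping link from bouncing the vehicle between modes. Latching Failsafe until an explicit `reset()` is a design choice of this sketch, not a documented behaviour of the stack.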

Custom LION binary UDP protocol with packet fragmentation/reassembly: streams point clouds of up to 50M points to the GCS over constrained wireless links, alongside a bidirectional command plane
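Fragmentation and reassembly over UDP can be sketched as follows. The actual LION wire format is not described here, so the 8-byte header (message id, fragment index, fragment count, little-endian) and the names `fragment` and `Reassembler` are assumptions made purely for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <optional>
#include <utility>
#include <vector>

using Bytes = std::vector<std::uint8_t>;

// Assumed header: u32 message id, u16 fragment index, u16 fragment count.
constexpr std::size_t kHeaderSize = 8;

inline void putU16(Bytes& b, std::uint16_t v) {
    b.push_back(static_cast<std::uint8_t>(v & 0xFF));
    b.push_back(static_cast<std::uint8_t>(v >> 8));
}
inline void putU32(Bytes& b, std::uint32_t v) {
    for (int i = 0; i < 4; ++i) b.push_back(static_cast<std::uint8_t>((v >> (8 * i)) & 0xFF));
}
inline std::uint16_t getU16(const Bytes& b, std::size_t o) {
    return static_cast<std::uint16_t>(b[o] | (b[o + 1] << 8));
}
inline std::uint32_t getU32(const Bytes& b, std::size_t o) {
    std::uint32_t v = 0;
    for (int i = 0; i < 4; ++i) v |= static_cast<std::uint32_t>(b[o + i]) << (8 * i);
    return v;
}

// Splits a payload into datagrams carrying at most max_chunk payload bytes each.
std::vector<Bytes> fragment(std::uint32_t msg_id, const Bytes& payload, std::size_t max_chunk) {
    assert(max_chunk > 0);
    const std::size_t count =
        payload.empty() ? 1 : (payload.size() + max_chunk - 1) / max_chunk;
    assert(count <= 0xFFFF);  // fragment count must fit the u16 header field
    std::vector<Bytes> out;
    for (std::size_t i = 0; i < count; ++i) {
        const std::size_t begin = i * max_chunk;
        const std::size_t end = std::min(payload.size(), begin + max_chunk);
        Bytes d;
        d.reserve(kHeaderSize + (end - begin));
        putU32(d, msg_id);
        putU16(d, static_cast<std::uint16_t>(i));
        putU16(d, static_cast<std::uint16_t>(count));
        d.insert(d.end(), payload.begin() + begin, payload.begin() + end);
        out.push_back(std::move(d));
    }
    return out;
}

// Collects fragments in any order; returns the payload once all have arrived.
class Reassembler {
public:
    std::optional<Bytes> accept(const Bytes& d) {
        if (d.size() < kHeaderSize) return std::nullopt;
        const std::uint32_t msg_id = getU32(d, 0);
        const std::uint16_t index = getU16(d, 4);
        const std::uint16_t count = getU16(d, 6);
        if (count == 0 || index >= count) return std::nullopt;
        Pending& p = pending_[msg_id];
        if (p.chunks.empty()) p.chunks.resize(count);
        if (p.chunks.size() != count) return std::nullopt;   // inconsistent header
        if (p.chunks[index].has_value()) return std::nullopt;  // duplicate fragment
        p.chunks[index] = Bytes(d.begin() + kHeaderSize, d.end());
        if (++p.received < count) return std::nullopt;
        Bytes whole;
        for (const auto& c : p.chunks) whole.insert(whole.end(), c->begin(), c->end());
        pending_.erase(msg_id);
        return whole;
    }

private:
    struct Pending {
        std::vector<std::optional<Bytes>> chunks;
        std::size_t received = 0;
    };
    std::map<std::uint32_t, Pending> pending_;
};
```

The sender would pass each datagram returned by `fragment` to its UDP socket, and the receiver would feed every incoming datagram to `Reassembler::accept`. A production version would also need to evict incomplete messages after a timeout and pick `max_chunk` below the link MTU; neither is handled in this sketch.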

Multi-session relocalization: loads prior map bundles and aligns to them via Scan Context retrieval and ICP/NDT refinement, enabling repeat-inspection workflows without GPS or ground truth

Autonomy Depth

Sensor fusion → SLAM → planning → safety → FC execution in a single deployable stack

GCS Rendering

Up to 50M points rendered live on constrained hardware over the custom binary protocol

Operational Resilience

Designed to keep operating through comms loss, degraded sensors, and GPS-denied conditions

ROS 2 Humble

C++17

FAST-LIO

nvblox CUDA ESDF

GTSAM iSAM2

Scan Context

Smart RTL

6-Level Autonomy

Custom Binary Protocol

Qt6 Cross-Platform GCS

MAVLink / ArduPilot

WebGPU / WebGL2